Context: 128K · Input: $0.294 per 1M tokens · Output: $0.441 per 1M tokens · Type: Chat · Status: Active

DeepSeek-V3.1

It excels in low-latency chat, code generation, and agent workflows, delivering scalable performance for developers and enterprises.

DeepSeek V3.1 is a high-efficiency hybrid AI model optimized for fast, direct responses without deep reasoning, supporting extensive multimodal inputs and large context windows.

DeepSeek-V3.1 is a hybrid language model built by DeepSeek AI. It runs on a Mixture-of-Experts (MoE) transformer architecture: a router sends each token through a small subset of the model's expert parameters rather than through the full network, which keeps latency and compute cost low without sacrificing output quality.

The Chat variant specifically operates in non-thinking mode. Rather than working through multi-step reasoning chains, it returns direct, high-quality answers with minimal overhead. That design choice makes DeepSeek-V3.1 a natural fit for applications where speed is a first-class requirement: customer-facing chatbots, CI/CD code automation pipelines, real-time data extraction, and any agentic system calling external tools in a tight loop.

The non-thinking Chat mode skips deliberation overhead entirely. You get crisp answers fast — ideal for high-throughput production environments.

First-class support for structured function calls, code agents, and search agents. Build complex agentic pipelines without bolting on workarounds.

MoE layers activate only the parameters relevant to each token. The result: competitive performance at a significantly lower compute footprint than dense alternatives.
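To make the per-token activation idea concrete, here is a minimal sketch of top-k expert routing in plain Python. It is a generic illustration of the technique, not DeepSeek's actual router: the function names, the choice of `k=2`, and the toy scalar "experts" are all assumptions made for the example.

```python
import math

def top_k_route(router_logits, k=2):
    """Pick the k highest-scoring experts for one token and normalize their weights."""
    # Rank expert indices by router score, highest first.
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    # Softmax over only the selected experts so their weights sum to 1.
    peak = max(router_logits[i] for i in chosen)
    exps = [math.exp(router_logits[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]

def moe_layer(token, experts, router_logits, k=2):
    """Weighted sum of the selected experts' outputs; unselected experts never run."""
    return sum(weight * experts[i](token)
               for i, weight in top_k_route(router_logits, k))

# Toy experts operating on a single number (hypothetical stand-ins for expert networks).
toy_experts = [lambda x: x + 1, lambda x: x * 2, lambda x: x - 3, lambda x: x * x]
toy_logits = [0.1, 2.0, -1.0, 2.0]
```

With four experts and `k=2`, only half of the expert functions are evaluated for this token, which is the source of the compute savings described above.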

Extended multilingual training means you can serve global users from a single model deployment without separate fine-tuning per language.

Every parameter below is relevant to planning your integration. Understanding them upfront helps you size context windows correctly, avoid truncation surprises, and choose the right sampling settings for your use case.

The model's non-thinking, high-speed profile makes it particularly strong in scenarios where fast, accurate, and contextually aware output matters more than deep step-by-step deliberation.

Write, debug, and refactor code across Python, TypeScript, Go, Rust, and more. The 128K context lets the model process full files and multi-file diffs in a single request, no chunking required.

DeepSeek-V3.1 ships with built-in support for structured tool calls, code execution agents, and search agents. Wire it into LangChain, AutoGen, or your own orchestration layer for autonomous task execution.
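As a rough sketch of what a structured tool call can look like, the snippet below builds a request body and dispatches a returned tool call to a local handler. It assumes an OpenAI-style chat completions schema (`tools`, `tool_choice`, JSON-encoded `arguments`); the model id `deepseek-v3.1-chat` and the `get_order_status` tool are hypothetical placeholders, so check your provider's documentation for the exact names. No network request is made here.

```python
import json

def build_tool_request(user_message, model="deepseek-v3.1-chat"):
    """Build a chat request that exposes one tool (model id is a placeholder)."""
    get_order_status = {
        "type": "function",
        "function": {
            "name": "get_order_status",  # hypothetical tool
            "description": "Look up the shipping status of an order.",
            "parameters": {
                "type": "object",
                "properties": {"order_id": {"type": "string"}},
                "required": ["order_id"],
            },
        },
    }
    return {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
        "tools": [get_order_status],
        "tool_choice": "auto",
    }

def dispatch_tool_call(tool_call, handlers):
    """Run the local handler named in a tool call and return its result as JSON text."""
    name = tool_call["function"]["name"]
    arguments = json.loads(tool_call["function"]["arguments"])
    return json.dumps(handlers[name](**arguments))
```

In an agent loop, the string returned by `dispatch_tool_call` would typically be appended to the conversation as a tool message before the next model call.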

High response speed and multilingual support across 100+ languages make this a strong foundation for global support bots that need consistent, coherent replies without visible latency.

Feed entire reports, contracts, or research papers into the 128K window and extract structured summaries, comparisons, or action items. No pre-chunking pipelines, no retrieval workarounds for moderate-length docs.
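Before sending a long document in one request, it can help to check that it plausibly fits the window. The sketch below uses a crude 4-characters-per-token heuristic, which is an assumption and not the model's real tokenizer; the 128,000-token limit and the output reserve are likewise illustrative values.

```python
CONTEXT_WINDOW_TOKENS = 128_000  # "128K" taken at face value; exact limit is an assumption
CHARS_PER_TOKEN = 4              # rough heuristic for English text, not a real tokenizer

def estimate_tokens(text):
    """Approximate token count by character length, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)

def fits_in_context(document, prompt, reserved_output_tokens=4_000):
    """Return True if document + prompt + room for the reply fit in the window."""
    needed = (estimate_tokens(document)
              + estimate_tokens(prompt)
              + reserved_output_tokens)
    return needed <= CONTEXT_WINDOW_TOKENS
```

For anything close to the limit, count tokens with the provider's tokenizer instead of relying on this estimate.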

Combine image and text inputs to process charts, screenshots, or scanned documents alongside natural language queries, useful for financial dashboards, compliance review, and visual data extraction.

Build responsive, multi-turn educational tools that explain concepts clearly, adapt to learner level, and handle follow-up questions, without the reasoning overhead of a more expensive model.

• 1M input tokens: $0.294

• 1M output tokens: $0.441
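A quick way to turn these rates into a per-request budget is sketched below. The prices are the ones listed above; the function name and the example token counts are illustrative.

```python
INPUT_PRICE_PER_M = 0.294   # USD per 1M input tokens, from the pricing above
OUTPUT_PRICE_PER_M = 0.441  # USD per 1M output tokens, from the pricing above

def estimate_cost(input_tokens, output_tokens):
    """Estimated USD cost of one request at the listed per-token rates."""
    return (input_tokens * INPUT_PRICE_PER_M
            + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000

# Example: a 100,000-token document with a 2,000-token summary.
example_cost = estimate_cost(100_000, 2_000)
```

Here the input side costs 100,000 × 0.294 / 1,000,000 = $0.0294 and the output side 2,000 × 0.441 / 1,000,000 = $0.000882, for a total of about $0.0303.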

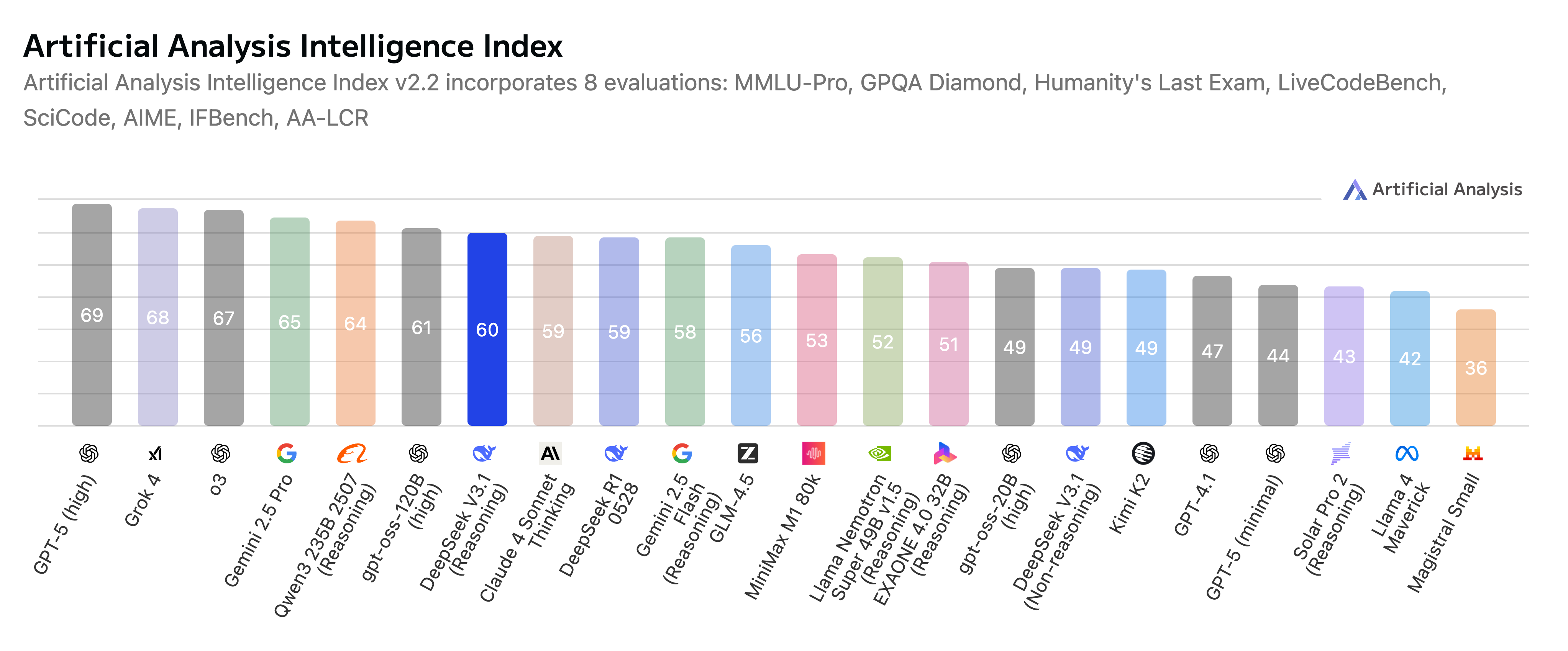

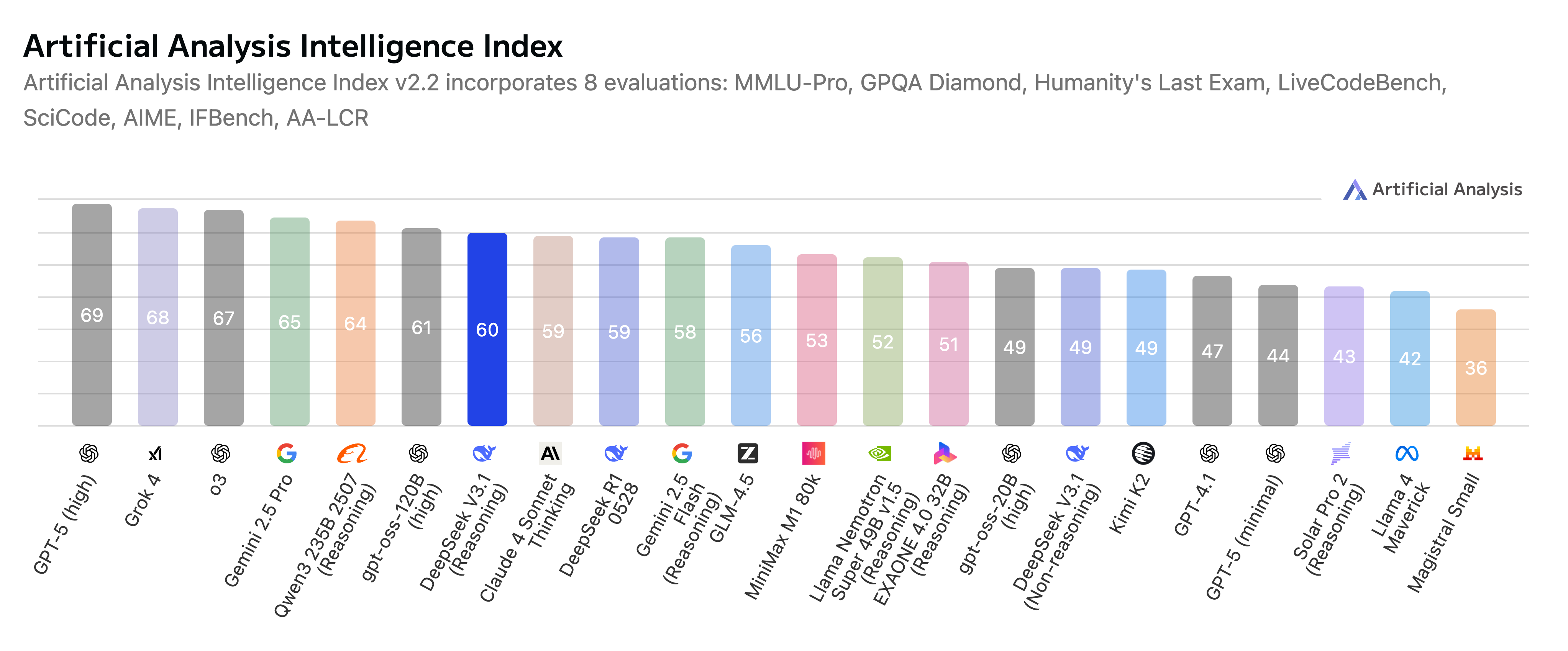

Knowing where a model excels (and where it doesn't) saves you from using an expensive sledgehammer for a finishing nail. Here's how DeepSeek-V3.1 stacks up against the main alternatives you're likely to consider.

V3.1 is a meaningful step up from its predecessor. Inference speed has improved by around 30%, multimodal alignment accuracy is noticeably better, and handling of low-resource languages is sharper. For anyone already on DeepSeek V3, migrating to V3.1 is an easy upgrade — the API interface is identical.

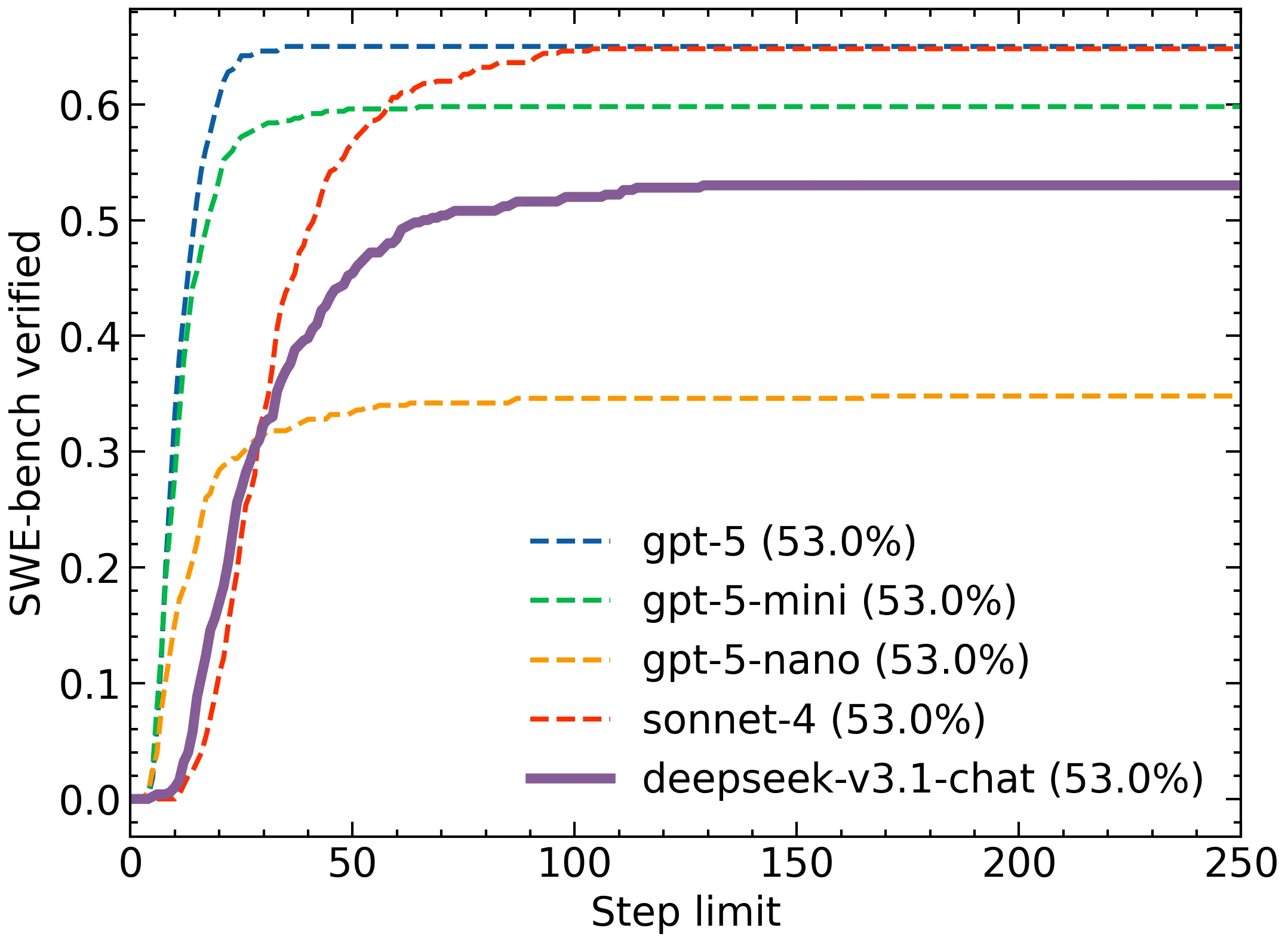

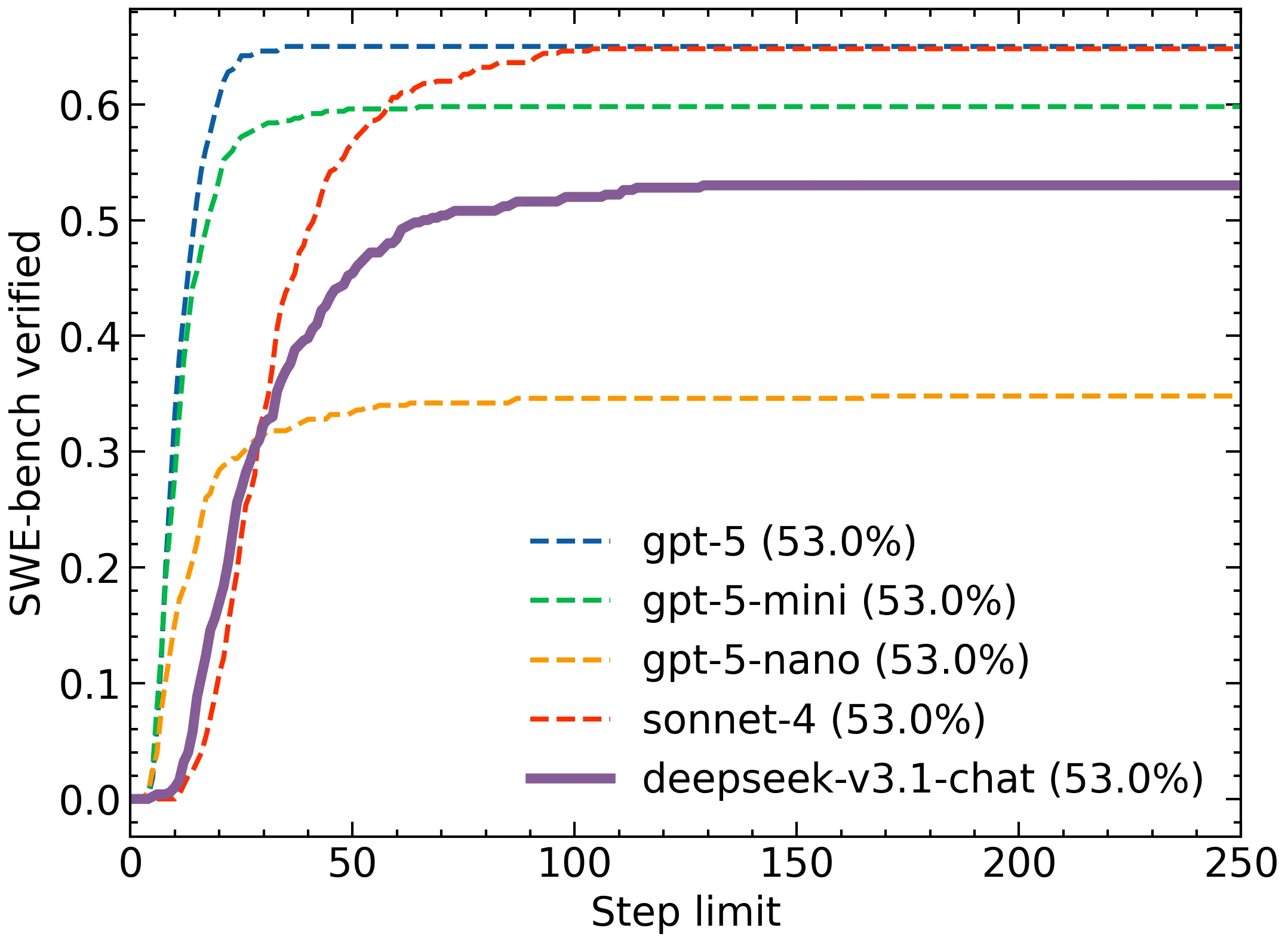

GPT-4.1 is OpenAI's code-optimized workhorse and a fine choice if you're deep in the OpenAI ecosystem. DeepSeek-V3.1 offers a different tradeoff: the MoE architecture delivers better resource efficiency, and its visual-textual coherence is stronger for multimodal tasks. For pure code generation, quality is competitive at roughly 7× lower cost.

GPT-5 is a more powerful model with a 400K context window and broader multimodal breadth. For tasks that genuinely need that scale, it's the right call. But DeepSeek-V3.1 covers the majority of real-world production workloads at a fraction of the price — and through AI/ML API you can mix both under a single account, routing tasks to the right model without switching integrations.
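One simple way to route tasks between the two models is a size-and-difficulty check, sketched below. The model ids are hypothetical placeholders, and the rule itself (fall back to the larger model when the prompt exceeds 128K tokens or the task is flagged as needing deep reasoning) is an assumption for illustration, not a recommendation from the provider.

```python
DEEPSEEK_MODEL = "deepseek-v3.1-chat"  # hypothetical model id
LARGE_MODEL = "gpt-5"                  # hypothetical model id
DEEPSEEK_CONTEXT_TOKENS = 128_000      # context window stated for DeepSeek-V3.1

def choose_model(estimated_prompt_tokens, needs_deep_reasoning=False):
    """Pick the cheaper model unless the task exceeds its window or needs deliberation."""
    if needs_deep_reasoning or estimated_prompt_tokens > DEEPSEEK_CONTEXT_TOKENS:
        return LARGE_MODEL
    return DEEPSEEK_MODEL
```

Because both models sit behind the same account, the returned id can be dropped into the `model` field of an otherwise identical request.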
