Gemini 3 Flash

Gemini 3 Flash Preview is Google’s low-latency, high-throughput multimodal LLM API built for agentic workflows, coding assistants, and document intelligence without giving up Pro-grade reasoning controls.

Gemini 3 Flash (Preview) is built to deliver near-frontier capability at Flash speed. It is a good fit for agentic workflows, coding helpers, document intelligence, and high-volume production apps where latency and cost matter as much as quality. It’s rolling out across the Gemini API (AI Studio), Vertex AI, and other Google developer surfaces, and it’s also becoming the default model in parts of the consumer Gemini experience.

Architecture: Transformer-based generative multimodal LLM

Context Length: Up to 1M tokens input / 64K tokens output

Capabilities: Text + multimodal understanding (images, audio, video, PDFs), structured outputs, tool/function calling, agent-friendly behaviors.

Knowledge cutoff: Jan 2025

Inference features: Reasoning (“thinking”) is supported, and Gemini API pricing explicitly bills thinking tokens as output tokens.

Speed / throughput: Independent pre-release testing reported ~218 output tokens/sec for Gemini 3 Flash Preview, fast enough for near-real-time agent loops and high-QPS backends.

Quality vs. prior Flash: Google/DeepMind positions Gemini 3 Flash as meaningfully stronger than previous Flash generations on difficult extraction and document tasks.
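The spec figures above can be combined into a quick back-of-the-envelope check for a single request. The Python sketch below is illustrative only: `RequestPlan` and its methods are hypothetical names, token counts (especially thinking tokens) are caller-supplied estimates, the per-million prices are placeholders rather than published rates, and counting thinking tokens against the 64K output limit is an assumption to verify against the current API docs.

```python
from dataclasses import dataclass

# Limits from the spec list above. Counting thinking tokens against the
# output limit is an ASSUMPTION of this sketch; check the current API docs.
MAX_INPUT_TOKENS = 1_000_000
MAX_OUTPUT_TOKENS = 64_000
# Pre-release throughput figure quoted above; real-world speed will vary.
ASSUMED_TOKENS_PER_SEC = 218.0


@dataclass
class RequestPlan:
    """Hypothetical helper: rough limits/cost/latency check for one request."""
    input_tokens: int
    visible_output_tokens: int
    thinking_tokens: int  # an estimate; actual thinking usage varies per call

    @property
    def billed_output_tokens(self) -> int:
        # Thinking tokens are billed at the output-token rate.
        return self.visible_output_tokens + self.thinking_tokens

    def fits_limits(self) -> bool:
        return (self.input_tokens <= MAX_INPUT_TOKENS
                and self.billed_output_tokens <= MAX_OUTPUT_TOKENS)

    def est_cost_usd(self, input_price_per_m: float, output_price_per_m: float) -> float:
        # Prices are per 1M tokens and must be supplied by the caller.
        return (self.input_tokens * input_price_per_m
                + self.billed_output_tokens * output_price_per_m) / 1_000_000

    def est_generation_seconds(self) -> float:
        # Decode time only: ignores time-to-first-token, queuing, and network.
        return self.billed_output_tokens / ASSUMED_TOKENS_PER_SEC


# Placeholder rates (1.00 input / 4.00 output per 1M tokens), not quoted prices.
plan = RequestPlan(input_tokens=200_000, visible_output_tokens=1_500, thinking_tokens=2_860)
print(plan.fits_limits())                          # True
print(round(plan.est_cost_usd(1.00, 4.00), 4))     # 0.2174
print(round(plan.est_generation_seconds(), 1))     # 20.0
```

With these placeholder numbers, 4,360 billed output tokens at 218 tokens/sec works out to about 20 seconds of decode time. Because thinking tokens are billed and generated as output, they can make up a large share of both cost and latency on reasoning-heavy calls.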

Gemini 3 Flash is a strong default when you need:

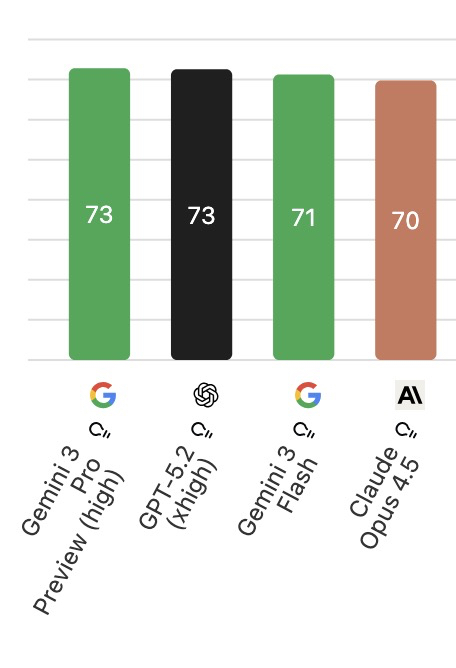

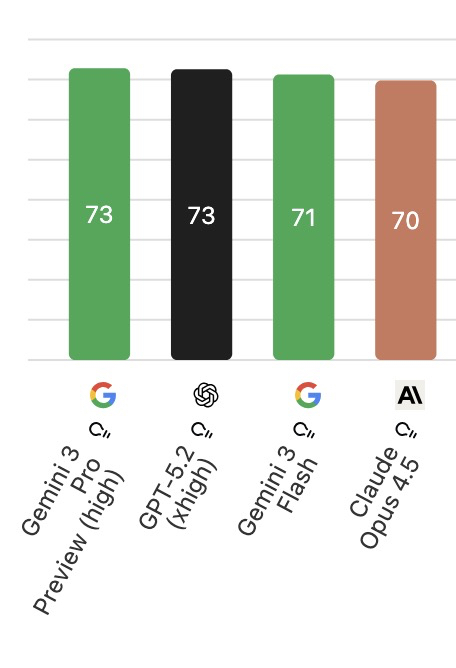

Gemini 3 Pro is the “reasoning-first” flagship, while Flash targets similar agentic and coding workflows but is optimized for cost and latency.

Gemini 3 Flash is positioned as a major upgrade that replaces the previous default Flash model, aiming for more detailed answers while staying fast.

GPT-5.2 is a reasoning- and coding-first flagship, typically chosen when you want the most dependable multi-step analysis, strong code correctness, and consistently polished final answers in complex, real-world workflows. Gemini 3 Flash (Preview) targets many of the same agentic, coding, and document-intelligence use cases, but it’s tuned primarily for low latency and high throughput, which makes it the more “speed-first” default when responsiveness matters. The other big practical difference is context behavior: Flash emphasizes very long input context for feeding in large corpora or long documents, while GPT-5.2 emphasizes high-quality long-form reasoning and large outputs for deeply structured work.

Gemini 3 Flash applies policy-based safety filtering and can block or stop generations in restricted categories. Guardrails can feel strict on edge-case prompts, especially around sensitive topics, so production apps usually need fallback prompts and clear UX for refusals. Long-context inputs and high “thinking” settings can also increase latency and token usage.
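One way to implement the fallback-prompt and refusal-UX pattern described above is the model-agnostic Python sketch below. It makes no assumptions about a specific SDK: `generate` is a hypothetical callable you would write around your own model call, and `GenerationResult.blocked` stands in for however your client reports a safety block. The helper tries each prompt in order and returns a user-facing refusal message only if every attempt is blocked.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence, Tuple


@dataclass
class GenerationResult:
    # Hypothetical normalized result; map your SDK's response into this shape.
    text: str
    blocked: bool


REFUSAL_MESSAGE = (
    "Sorry, this request couldn't be completed. "
    "Try rephrasing it or removing sensitive details."
)


def generate_with_fallback(
    generate: Callable[[str], GenerationResult],
    prompts: Sequence[str],
) -> Tuple[str, Optional[int]]:
    """Try each prompt in order; return (text, index of the prompt that succeeded).

    If every attempt is blocked, return a user-facing refusal message and None.
    """
    for i, prompt in enumerate(prompts):
        result = generate(prompt)
        if not result.blocked:
            return result.text, i
    return REFUSAL_MESSAGE, None


def fake_generate(prompt: str) -> GenerationResult:
    # Stand-in for a real model call: treats prompts containing "RESTRICTED" as blocked.
    if "RESTRICTED" in prompt:
        return GenerationResult(text="", blocked=True)
    return GenerationResult(text=f"answer to: {prompt}", blocked=False)


text, used = generate_with_fallback(
    fake_generate,
    ["RESTRICTED original prompt", "softened fallback prompt"],
)
print(used, text)  # 1 answer to: softened fallback prompt
```

Keeping the fallback list short bounds worst-case latency and token spend, since each blocked attempt costs a full round trip; the returned index also lets you log how often the primary prompt is refused.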
