HunyuanVideo-Foley

HunyuanVideo-Foley is a multimodal AI model that generates high-quality, synchronized audio directly from video input.

Designed for scalability, it streamlines audio production across film, gaming, and social media content.

Created by Tencent, HunyuanVideo-Foley generates realistic sound effects directly from video. It reduces the need for manual Foley work by automatically producing audio that matches motion, timing, and scene context.

Instead of treating audio as a separate step, the model builds it directly into the video experience. The output feels aligned, responsive, and ready to use, whether you're working on short-form content or full-scale production.

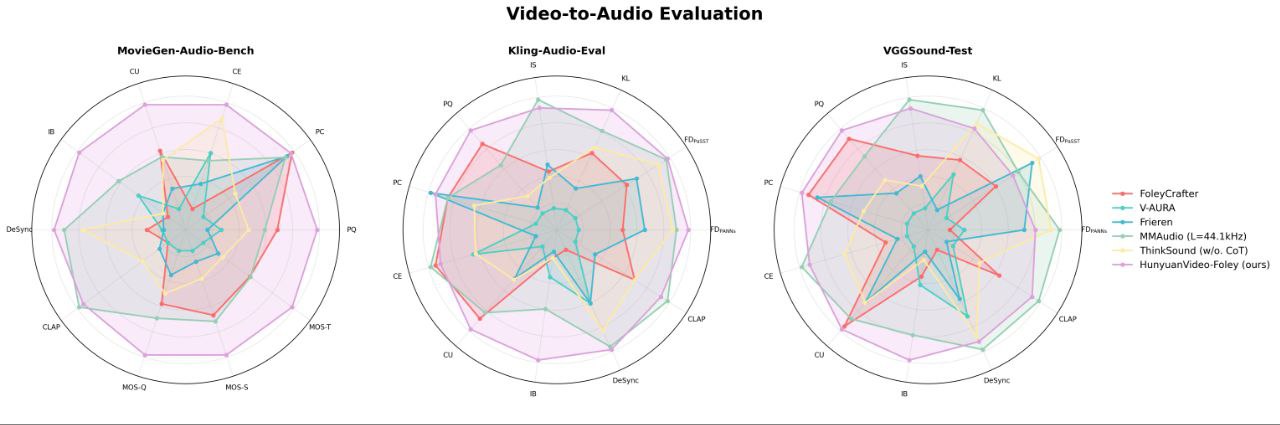

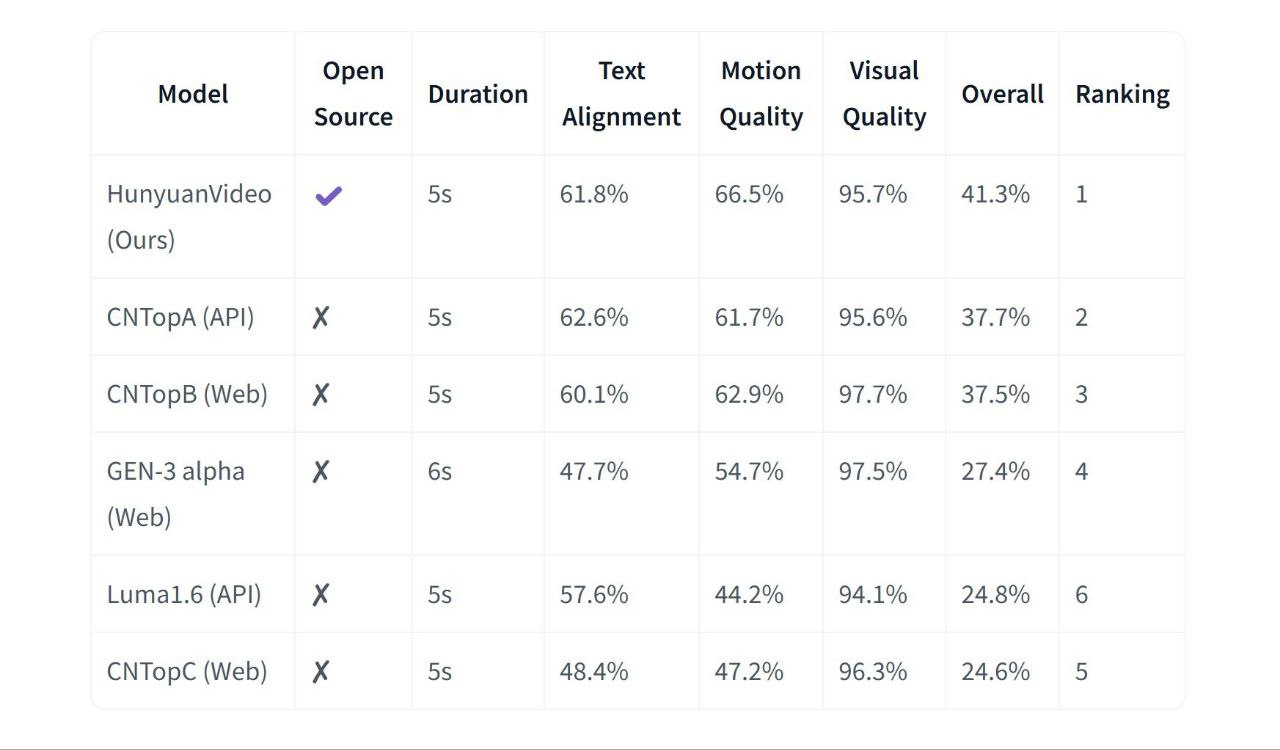

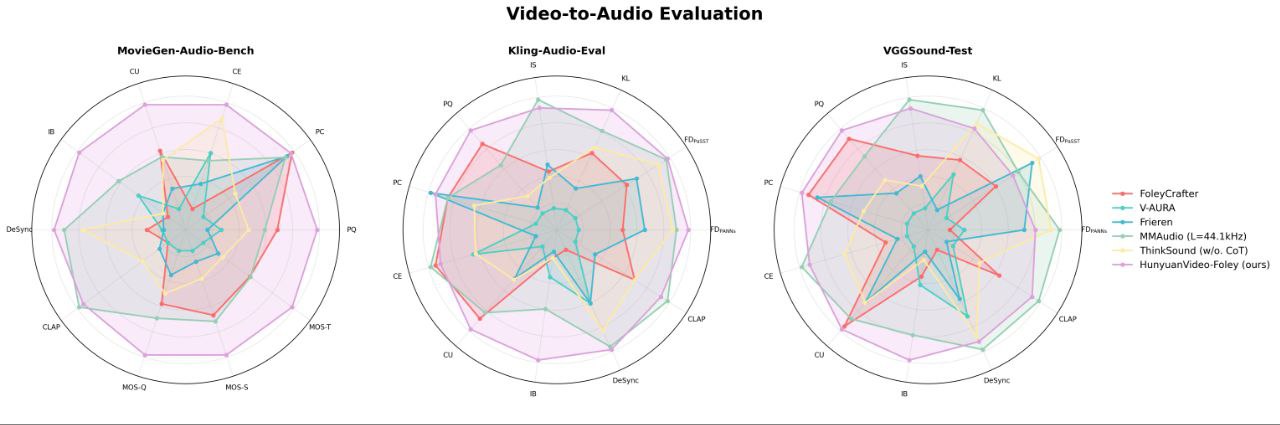

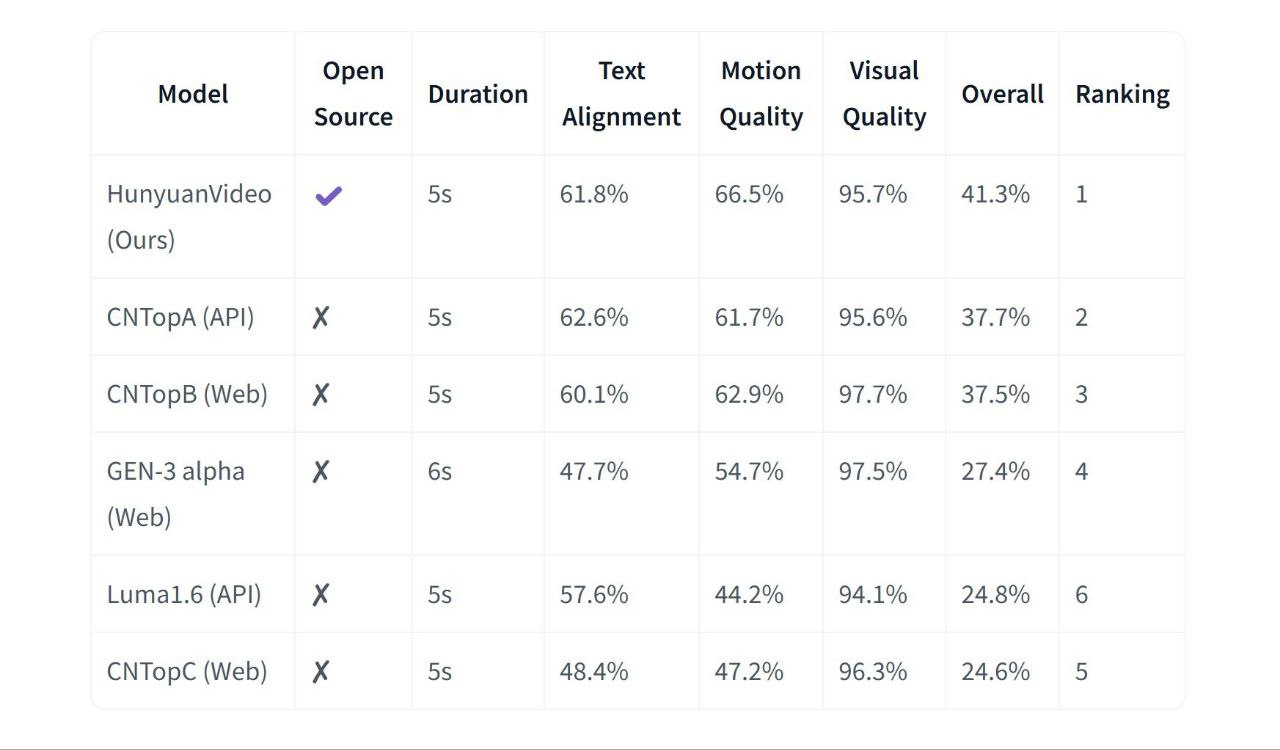

In benchmarks including Kling-Audio-Eval, VGGSound-Test, and MovieGen-Audio-Bench, HunyuanVideo-Foley consistently outperforms comparable models such as FoleyCrafter, MMAudio, V-AURA, and ThinkSound.

According to both objective evaluations and professional human assessments, it leads well-known open-source models in audio fidelity, semantic alignment between visuals and sound, temporal synchronization, and distribution-matching metrics. The model performs stably across a wide variety of video content and audio scenarios, which supports its reliability in diverse real-world applications.

At the heart of HunyuanVideo-Foley lies a diffusion-based transformer that operates across multiple modalities. It encodes video frames into structured representations, aligns them with optional textual prompts, and generates audio through a latent diffusion process.

This architecture enables the model to maintain both acoustic realism and semantic coherence, ensuring that generated sounds match not just what is visible, but what is happening.
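The flow described above (encode the video, optionally combine it with text, then refine a noisy latent over several steps) can be illustrated with a toy sketch. Nothing here reflects the model's actual code: `toy_condition`, `toy_denoise_step`, and `generate_latent` are hypothetical names, the features are plain lists of floats, and the "denoiser" is a hand-written stand-in for a learned network. It only shows the control flow of conditioned iterative denoising.

```python
import random
from typing import List, Optional


def toy_condition(video_features: List[float],
                  text_features: Optional[List[float]] = None) -> List[float]:
    """Combine video features with optional text features.

    Assumption: a simple element-wise average stands in for real
    cross-modal alignment.
    """
    if text_features is None:
        return list(video_features)
    return [(v + t) / 2.0 for v, t in zip(video_features, text_features)]


def toy_denoise_step(latent: List[float], condition: List[float],
                     step: int, total_steps: int) -> List[float]:
    """Stand-in for a learned denoiser: pull the latent toward the condition."""
    weight = 1.0 / (total_steps - step)  # the last step uses weight 1.0
    return [x + weight * (c - x) for x, c in zip(latent, condition)]


def generate_latent(video_features: List[float],
                    text_features: Optional[List[float]] = None,
                    steps: int = 10, seed: int = 0) -> List[float]:
    """Start from Gaussian noise and refine it step by step."""
    if steps < 1:
        raise ValueError("steps must be at least 1")
    rng = random.Random(seed)
    condition = toy_condition(video_features, text_features)
    latent = [rng.gauss(0.0, 1.0) for _ in condition]
    for step in range(steps):
        latent = toy_denoise_step(latent, condition, step, steps)
    return latent


if __name__ == "__main__":
    # Video-only conditioning; the text prompt is optional.
    print(generate_latent([0.2, 0.4, 0.6]))
```

In a real latent diffusion system the refined latent would then be decoded into a waveform; that stage is omitted here.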

One of the defining features of the model is its ability to synchronize sound with visual events at a fine-grained level. Rather than producing loosely aligned audio, it captures timing details such as motion speed, impact moments, and environmental transitions.

This precision is especially noticeable in scenes involving physical interaction, where even slight desynchronization would break immersion.
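One way to make "slight desynchronization" concrete is to measure how far each visible event is from the nearest sound onset. The sketch below is an illustration only, not the metric used in the benchmarks mentioned earlier; the function name `mean_abs_offset_ms` and the timestamps are hypothetical.

```python
from typing import List


def mean_abs_offset_ms(visual_events_s: List[float],
                       audio_onsets_s: List[float]) -> float:
    """Average distance, in milliseconds, from each visual event
    to its nearest audio onset. Inputs are timestamps in seconds."""
    if not visual_events_s or not audio_onsets_s:
        raise ValueError("need at least one visual event and one audio onset")
    total = 0.0
    for event in visual_events_s:
        nearest = min(audio_onsets_s, key=lambda onset: abs(onset - event))
        total += abs(nearest - event)
    return 1000.0 * total / len(visual_events_s)


if __name__ == "__main__":
    # Hypothetical footstep impacts seen in the video, and the onsets
    # detected in the generated audio.
    footsteps = [0.50, 1.00, 1.50]
    onsets = [0.52, 0.99, 1.53]
    print(f"mean offset: {mean_abs_offset_ms(footsteps, onsets):.1f} ms")
```

A lower value means the sounds land closer to the moments they are supposed to accompany.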

To maintain consistency across modalities, the model uses a dedicated alignment framework that harmonizes visual, textual, and audio embeddings. This reduces common issues like mismatched sound cues or overly dominant audio patterns, resulting in a more balanced and believable output.

The model accepts raw video as input and can optionally incorporate textual descriptions to guide the output. It then produces a continuous audio track that includes environmental sounds, interactions, and subtle background elements.

In a simple walking scene, for example, it does not just generate footsteps. It also introduces ambient noise, surface-dependent variations, and spatial depth, creating a richer auditory experience.
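Because the audio track is continuous and spans the whole clip, its length follows from the video's duration. The arithmetic can be sketched as below; the 48 kHz default sample rate is an assumption made for illustration, and `audio_samples_for_clip` is a hypothetical helper, not part of any released API.

```python
def audio_samples_for_clip(frame_count: int, fps: float,
                           sample_rate_hz: int = 48_000) -> int:
    """Number of audio samples needed to cover a clip of the given length.

    Assumption: sample_rate_hz defaults to 48 kHz for illustration only.
    """
    if frame_count < 0:
        raise ValueError("frame_count must be non-negative")
    if fps <= 0:
        raise ValueError("fps must be positive")
    duration_s = frame_count / fps
    return round(duration_s * sample_rate_hz)


if __name__ == "__main__":
    # A 10-second clip: 240 frames at 24 frames per second.
    print(audio_samples_for_clip(240, 24))
```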

HunyuanVideo-Foley performs well across a wide range of visual domains. It can handle cinematic footage, animated sequences, and user-generated content with minimal adjustments. This flexibility makes it suitable for both high-end production and rapid prototyping workflows.

Traditional Foley production is labor-intensive, often requiring dedicated recording sessions, specialized equipment, and extensive post-processing. HunyuanVideo-Foley streamlines this process into a single inference step, dramatically reducing turnaround time.

Instead of manually crafting each sound layer, creators can focus on refining the overall experience, using the model as a foundation rather than a replacement for creativity.
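Once an audio track has been generated, a typical next step is attaching it to the original video. The sketch below builds an `ffmpeg` command for that purpose. It assumes `ffmpeg` is installed and that the model's output was saved as a separate audio file; the file names and the `build_mux_command` helper are hypothetical.

```python
from typing import List


def build_mux_command(video_path: str, audio_path: str,
                      output_path: str) -> List[str]:
    """Build an ffmpeg command that pairs a video stream with a new audio track."""
    return [
        "ffmpeg",
        "-i", video_path,   # input 0: the original video
        "-i", audio_path,   # input 1: the generated audio
        "-map", "0:v:0",    # take the first video stream from input 0
        "-map", "1:a:0",    # take the first audio stream from input 1
        "-c:v", "copy",     # copy the video stream without re-encoding
        "-c:a", "aac",      # encode the audio as AAC
        "-shortest",        # stop at the end of the shorter stream
        output_path,
    ]


if __name__ == "__main__":
    cmd = build_mux_command("scene.mp4", "scene_foley.wav", "scene_with_audio.mp4")
    print(" ".join(cmd))
    # To actually run it:
    # import subprocess; subprocess.run(cmd, check=True)
```

Copying the video stream keeps the picture untouched, so only the new audio is encoded.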

HunyuanVideo-Foley stands out for its ability to produce synchronized, high-quality audio that feels organically tied to visual content. Its multimodal design allows it to capture subtle contextual cues, making outputs more expressive than those generated by rule-based or library-driven systems.

At the same time, performance depends on input quality and computational resources. Complex scenes may require more processing time, and achieving precise creative control can involve iterative refinement, especially when combining video and text inputs.
