262K

1.56

7.8

Chat

Active

Qwen3-Max Instruct

Its non-thinking mode favors fast, direct instruction-following responses, making it highly practical for enterprise and developer use.

Qwen3-Max Instruct is a trillion-parameter language model with a context window of roughly 262k tokens, broad language support, and strong performance on code and math tasks.

Qwen3-Max Instruct is Alibaba’s flagship large language model (LLM), with over 1 trillion parameters, released in September 2025. It combines large-scale training data with an advanced architecture and is strongest on technical, code, and math tasks. This instruct-tuned variant is optimized for fast, direct instruction following without step-by-step reasoning.

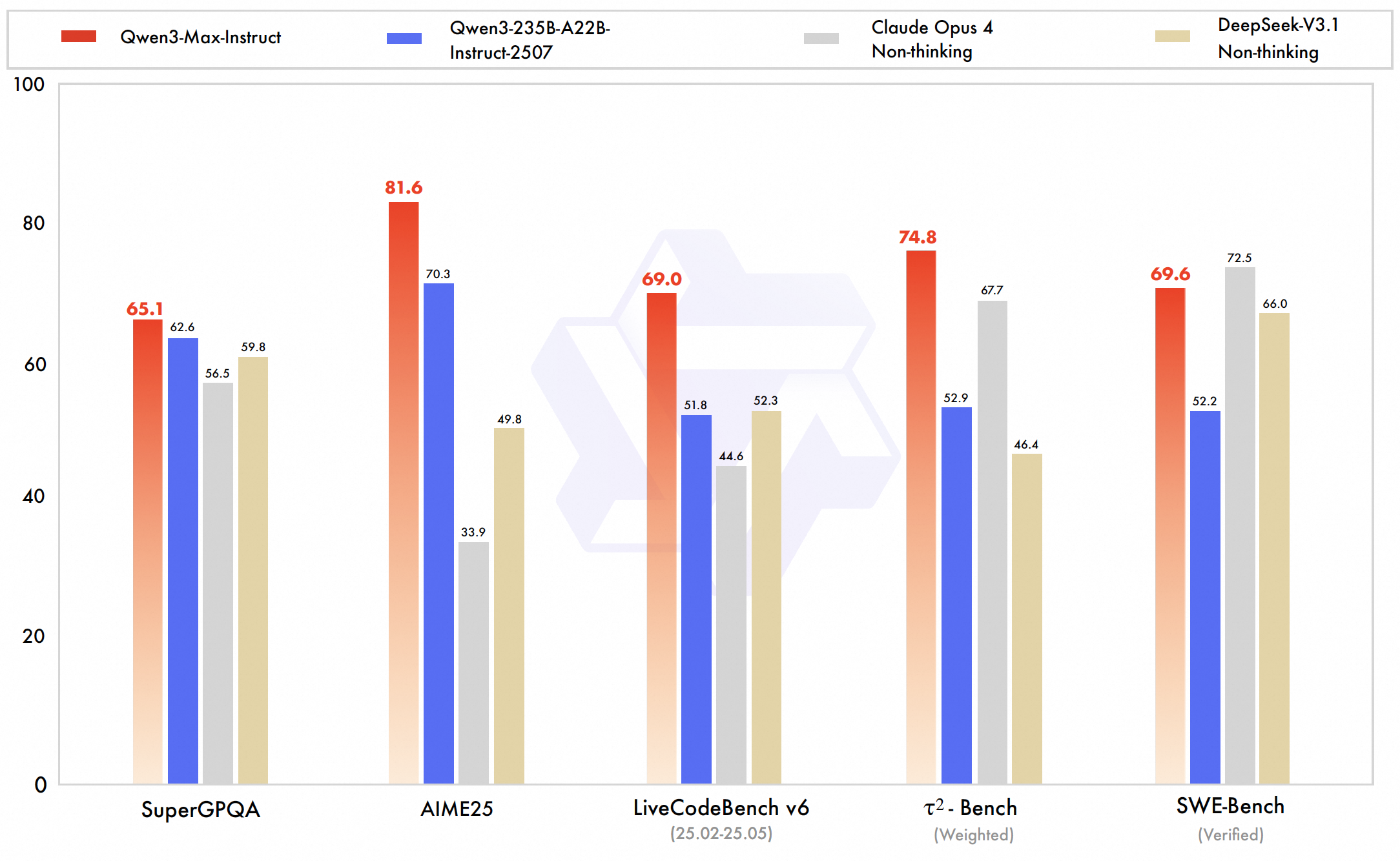

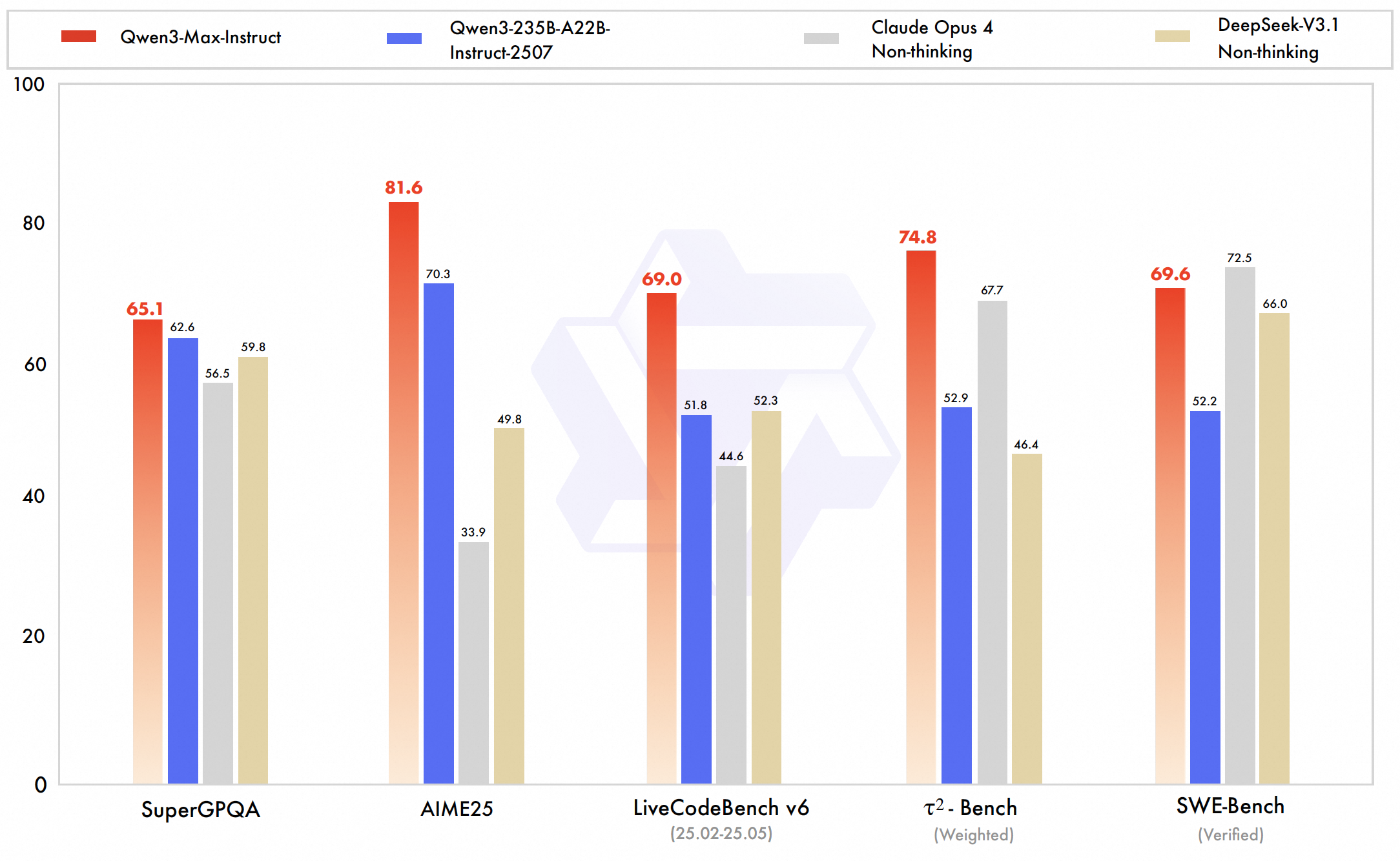

Qwen3-Max performs strongly in code, mathematical reasoning, and technical domains. According to Alibaba’s internal and leaderboard testing, it matches or outperforms top models such as GPT-5-Chat, Claude Opus 4, and DeepSeek V3.1 on multiple benchmarks.

vs GPT-5-Chat: Qwen3-Max Instruct leads in coding benchmarks and agent capabilities, with strong results on software engineering tasks. GPT-5-Chat, however, has a more mature ecosystem with multimodal features and wider commercial integrations. Qwen offers a larger context window (~262k tokens) than GPT-5-Chat (~128k tokens).

vs Claude Opus 4: Qwen3-Max surpasses Claude Opus 4 on agent and coding benchmarks while supporting a significantly larger context size. Claude excels in long-duration agent workflows and safety-focused behaviors. Overall the two models are close, with Claude having an edge in conservative code editing.

vs DeepSeek V3.1: Qwen3-Max outperforms DeepSeek V3.1 on agent benchmarks such as Tau2-Bench and on coding challenges, showing stronger reasoning and tool-use ability. DeepSeek V3.1 also falls behind Qwen on extended-context processing. Qwen’s training and scaling innovations give it a reported lead in large-scale tasks.

Accessible via AI/ML API. Documentation: available here.
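
As a rough illustration of what a call might look like, here is a minimal sketch that assumes the service exposes an OpenAI-style chat-completions endpoint. The base URL, the model identifier `alibaba/qwen3-max-instruct`, the `AIML_API_KEY` environment variable, and the response shape are all assumptions made for this example and are not confirmed by this page; check the official documentation for the real values.

```python
import json
import os
import urllib.request

# Assumptions (not confirmed by this page): the base URL, the model ID,
# and the OpenAI-style chat-completions request and response shape.
BASE_URL = "https://api.aimlapi.com/v1"
MODEL_ID = "alibaba/qwen3-max-instruct"  # hypothetical identifier
CONTEXT_WINDOW = 262_000  # approximate figure quoted on this page


def build_chat_request(prompt: str, max_tokens: int = 512) -> dict:
    """Build an OpenAI-style chat payload for a single user message."""
    if max_tokens >= CONTEXT_WINDOW:
        raise ValueError("max_tokens must leave room for the prompt")
    return {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }


def send_chat_request(payload: dict, api_key: str) -> str:
    """POST the payload and return the text of the first choice."""
    request = urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    key = os.environ.get("AIML_API_KEY")  # hypothetical variable name
    payload = build_chat_request("Write a function that reverses a string.")
    if key:
        print(send_chat_request(payload, key))
    else:
        # Without a key, just show the request that would be sent.
        print(json.dumps(payload, indent=2))
```

Keeping payload construction separate from the network call makes the request easy to inspect or log before sending, which helps when the exact model ID or endpoint still needs to be confirmed.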
